How I delegate work to a team of AI agents

Building systems for delegating work to AI agents

Hey 👋,

Most of us are using AI coding agents the same way. You’re in the terminal, you’re very involved. You prompt, you review, you go back and forth. This is still the right way to work in many cases. We need to be in the loop to keep quality high and think through problems.

But if you’re working on smaller tasks like bug fixes or documentation updates, you generally don’t need to be in the loop. I think about this as delegating vs micro-managing. You just want to hand these off to an agent and trust it to do the work.

The problem is there aren’t many easy ways to do that right now.

So this week, I built a proof of concept to help with this problem.

Agent worker

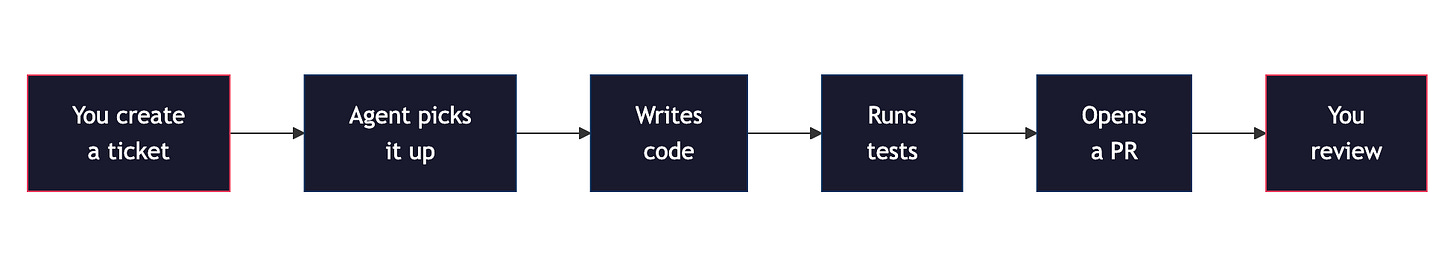

I built a simple TypeScript worker script that polls a task management system for tickets. When it finds one, it picks it up, delegates the work to Claude Code (headless), runs a series of checks, and opens a pull request. The ticket moves to “In Review.” I go and check the output.

I call this an agent control plane. Your task manager becomes your interface for delegating work to one or more agents.
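Here’s a rough sketch of that ticket lifecycle. It is not the code from the video: `WorkerDeps`, `processTicket`, and the `Failed` status are names I’m assuming for illustration, and each dependency stands in for a real integration (the task manager API, headless Claude Code, the check commands, the PR call).

```typescript
type Ticket = { id: string; title: string; description: string };
type Status = "Todo" | "In Progress" | "In Review" | "Failed";

// Everything the worker needs from the outside world.
// All of these names are hypothetical placeholders.
interface WorkerDeps {
  setStatus(ticketId: string, status: Status): Promise<void>;
  runAgent(ticket: Ticket): Promise<void>; // e.g. a headless agent run
  runChecks(): Promise<boolean>; // e.g. tests and linting
  openPullRequest(ticket: Ticket): Promise<string>; // returns the PR URL
}

// Pick up one ticket, delegate it, check the result, and hand it back for review.
async function processTicket(ticket: Ticket, deps: WorkerDeps): Promise<Status> {
  await deps.setStatus(ticket.id, "In Progress");
  try {
    await deps.runAgent(ticket);
    if (!(await deps.runChecks())) {
      throw new Error("checks failed");
    }
    await deps.openPullRequest(ticket);
    await deps.setStatus(ticket.id, "In Review");
    return "In Review";
  } catch {
    // Assumption: failures are surfaced on the ticket instead of opening a PR.
    await deps.setStatus(ticket.id, "Failed");
    return "Failed";
  }
}
```

Keeping the integrations behind one interface means the same flow can be pointed at a different task manager or agent without touching the pipeline itself.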

I’m using Linear, but the same approach should work with Jira, Monday.com, or anything with an API.

Task managers are the right way to delegate this kind of work because if you have many things going on at once, you don’t want to be chatting with agents. You want a way to actually track what they’re doing. You’d do the same thing if you’re working in a team. You wouldn’t delegate work via chat. You’d have some kind of system to track it, especially if you’re working on hundreds of tasks.

Pull vs push architecture

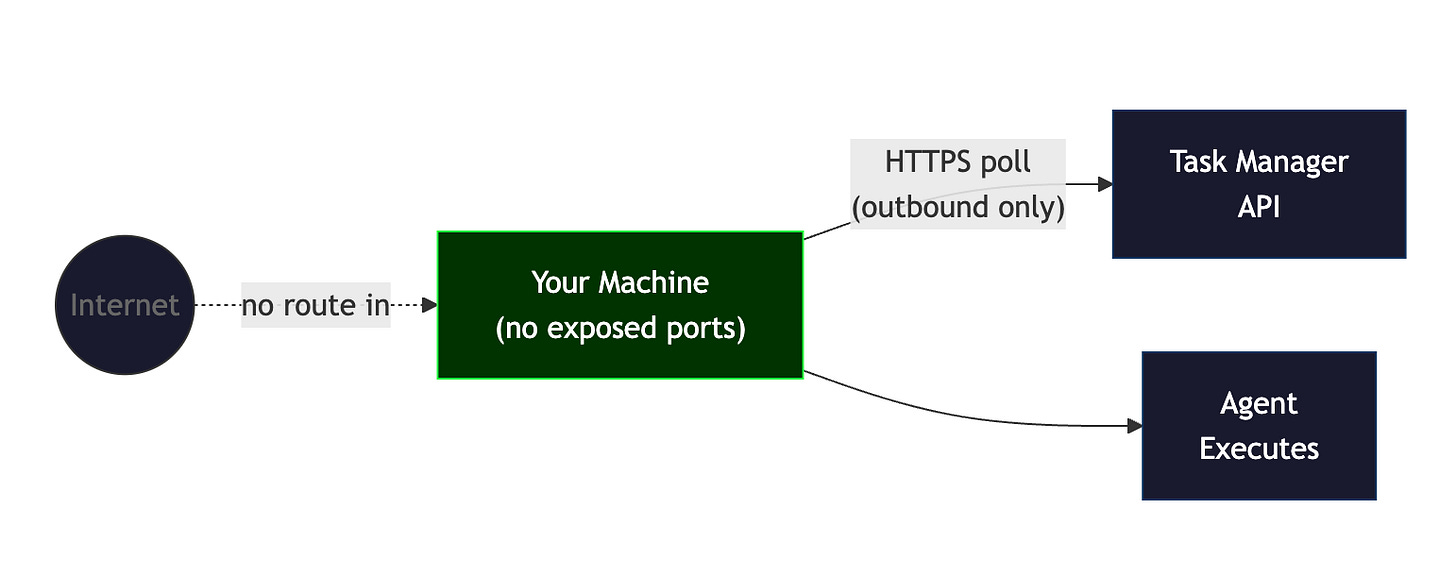

This is maybe the most interesting architectural decision. Push-based systems like OpenClaw use webhooks. You expose an endpoint, something hits it, the agent starts working. That means your agent runtime is reachable from the internet. Anyone can hit that endpoint.

A pull-based architecture is different. The agent worker makes outbound requests only. No need for open inbound ports. No exposed servers. If the worker goes down, tickets just sit in the queue until it comes back. The only trade-off is latency. If it takes 60 seconds to pick up a ticket, that’s fine. We don’t care about latency for this kind of work.

For a system where you’re giving an AI agent write access to your codebase and the ability to open PRs, we want the smallest possible attack surface. Polling gives you that.
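A minimal sketch of the polling side, assuming the task manager is reached through some outbound call. `fetchReady`, `handle`, and the options are hypothetical names, not part of any real SDK. The point is that the worker only ever calls out, and a failed poll just means the tickets wait for the next one.

```typescript
type Ticket = { id: string; title: string };

// Hypothetical: an outbound request that returns tickets ready for an agent.
type FetchReady = () => Promise<Ticket[]>;
// Hypothetical: whatever the worker does with a ticket once it has one.
type Handle = (ticket: Ticket) => Promise<void>;

interface WorkerOptions {
  intervalMs: number; // e.g. 60_000; latency is not a concern here
  shouldStop: () => boolean; // lets the caller shut the loop down
  onError?: (err: unknown) => void;
}

const sleep = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// One polling cycle: fetch whatever is ready and work through it in order.
async function pollOnce(fetchReady: FetchReady, handle: Handle): Promise<number> {
  const tickets = await fetchReady();
  for (const ticket of tickets) {
    await handle(ticket);
  }
  return tickets.length;
}

// The worker loop. There is no server and no inbound port, only outbound calls.
async function runWorker(
  fetchReady: FetchReady,
  handle: Handle,
  options: WorkerOptions,
): Promise<void> {
  const onError = options.onError ?? ((err) => console.error("poll failed:", err));
  while (!options.shouldStop()) {
    try {
      await pollOnce(fetchReady, handle);
    } catch (err) {
      // Tracker unreachable or a ticket blew up: report it and try again next cycle.
      onError(err);
    }
    await sleep(options.intervalMs);
  }
}
```

If the worker process dies, nothing is lost in this sketch: the queue lives in the task manager, so a restarted worker simply resumes polling.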

Deterministic guardrails around non-deterministic agents

One of the challenges when you’re delegating to agents this way is that you can’t iterate with them. The work needs to succeed in one shot, and the agent needs full permissions because nobody is there to approve each step. This is hard because, more often than not, agents make mistakes on their first attempt.

So we wrap the non-deterministic part (the agent writing code) with deterministic checks on both sides.

Pre-hooks run before the agent starts. Check out a worktree, git pull, make sure the environment is clean. If any of that fails, the agent doesn’t start.

Post-hooks run after the agent finishes. Run tests, run linting, push the code. These are just shell commands.
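A minimal sketch of the hook idea, assuming hooks are plain shell command strings run in order. `runHooks`, `runGuarded`, and the example commands are illustrative, not the worker’s actual config. `bun run lint` assumes a `lint` script exists in `package.json`, and the agent is reduced to a synchronous callback to keep the example short.

```typescript
import { spawnSync } from "node:child_process";

type Exec = (command: string, cwd: string) => boolean;

// Default executor: run the command through the system shell; true means exit code 0.
const shellExec: Exec = (command, cwd) =>
  spawnSync(command, { cwd, shell: true, stdio: "inherit" }).status === 0;

// Run commands in order and stop at the first failure.
// Returns the failing command, or null if everything passed.
function runHooks(commands: string[], cwd: string, exec: Exec = shellExec): string | null {
  for (const command of commands) {
    if (!exec(command, cwd)) return command;
  }
  return null;
}

interface HookConfig {
  pre: string[];
  post: string[];
}

type Outcome = { status: "skipped" | "failed" | "passed"; failedCommand?: string };

// Deterministic checks on both sides of the non-deterministic step.
function runGuarded(
  config: HookConfig,
  cwd: string,
  agent: () => void, // stand-in for the headless agent run
  exec: Exec = shellExec,
): Outcome {
  const preFailure = runHooks(config.pre, cwd, exec);
  if (preFailure !== null) return { status: "skipped", failedCommand: preFailure };

  agent();

  const postFailure = runHooks(config.post, cwd, exec);
  if (postFailure !== null) return { status: "failed", failedCommand: postFailure };
  return { status: "passed" };
}

// Example config (assumed commands; adjust to the repo).
const exampleHooks: HookConfig = {
  pre: ['test -z "$(git status --porcelain)"', "git pull --ff-only"],
  post: ["bun test", "bun run lint", "git push -u origin HEAD"],
};
```

Because the hooks stop at the first failure, putting the push last means code only leaves the machine after tests and linting have passed.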

The workflow inside the agent is also structured to compensate for the lack of back-and-forth. Write the code, run the tests, then run a code review with CodeRabbit to look for errors, fix anything it finds, and run the tests again. This reduces the number of iterations you need.

When solving a ticket:

1. Write the code to solve the ticket

2. Run `bun test` and fix any failures

3. Review your changes for code quality. Use CodeRabbit if available

4. Fix any issues found in the review

5. Run `bun test` again to confirm fixes didn't break anything

Automated code review

When you’re not sitting in the terminal reviewing the code, you need some kind of automated system to do that for you. I use CodeRabbit. It’s an AI code review tool that integrates with Claude Code and also runs on GitHub when a PR is opened. So every PR the agents open gets reviewed automatically before I even look at it.

It doesn’t catch everything. But the PRs I end up reviewing have already been through linting, tests, and an AI code review. The obvious stuff is already handled.

Scaling agents

What I like about this simple approach is that it scales well.

You can start with one worker running on your laptop. But you can also run multiple workers on different machines, on a VPS, wherever. Same delegation process, more throughput.

I showed this in the video, with two workers picking up two tickets at the same time and completing them in parallel.
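With more than one worker polling the same queue, something has to stop two of them from grabbing the same ticket. One common approach, which I’m assuming here and not describing from the actual worker, is to claim a ticket by moving it out of `Todo` and to proceed only if that move succeeded. The in-memory `TicketStore` below is a hypothetical stand-in for the task manager; a real tracker would need to make that status change atomic on its side.

```typescript
type Status = "Todo" | "In Progress" | "In Review";

// In-memory stand-in for the task manager (hypothetical).
class TicketStore {
  private statuses = new Map<string, Status>();
  private owners = new Map<string, string>();

  add(ticketId: string): void {
    this.statuses.set(ticketId, "Todo");
  }

  // Compare-and-set: the claim succeeds only if the ticket is still in "Todo".
  claim(ticketId: string, workerId: string): boolean {
    if (this.statuses.get(ticketId) !== "Todo") return false;
    this.statuses.set(ticketId, "In Progress");
    this.owners.set(ticketId, workerId);
    return true;
  }

  ownerOf(ticketId: string): string | undefined {
    return this.owners.get(ticketId);
  }
}

// Each worker walks the queue and takes the first ticket it manages to claim.
function claimNext(store: TicketStore, queue: string[], workerId: string): string | null {
  for (const ticketId of queue) {
    if (store.claim(ticketId, workerId)) return ticketId;
  }
  return null;
}
```

Two workers calling `claimNext` against the same queue end up with different tickets, and a worker that finds nothing left to claim simply waits for its next poll.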

The code is mostly a proof of concept to demonstrate the idea. What’s interesting here is the architecture, not necessarily the code. Pull-based delegation with deterministic guardrails around non-deterministic workers. That pattern holds regardless of which tools you use.

Full walkthrough with the demo and all the code is in the video:

Thanks for reading. Have an awesome week : )

P.S. If you want to go deeper on building AI systems, I run a community for people interested in these topics: https://skool.com/aiengineer